
March 31, 2026

AI tools like Claude, ChatGPT and Gemini are already part of many teams’ daily work. Support agents summarize tickets with them. Operations teams analyze logs. Marketing teams draft responses. The challenge is that these insights often stay outside the systems where work actually happens.

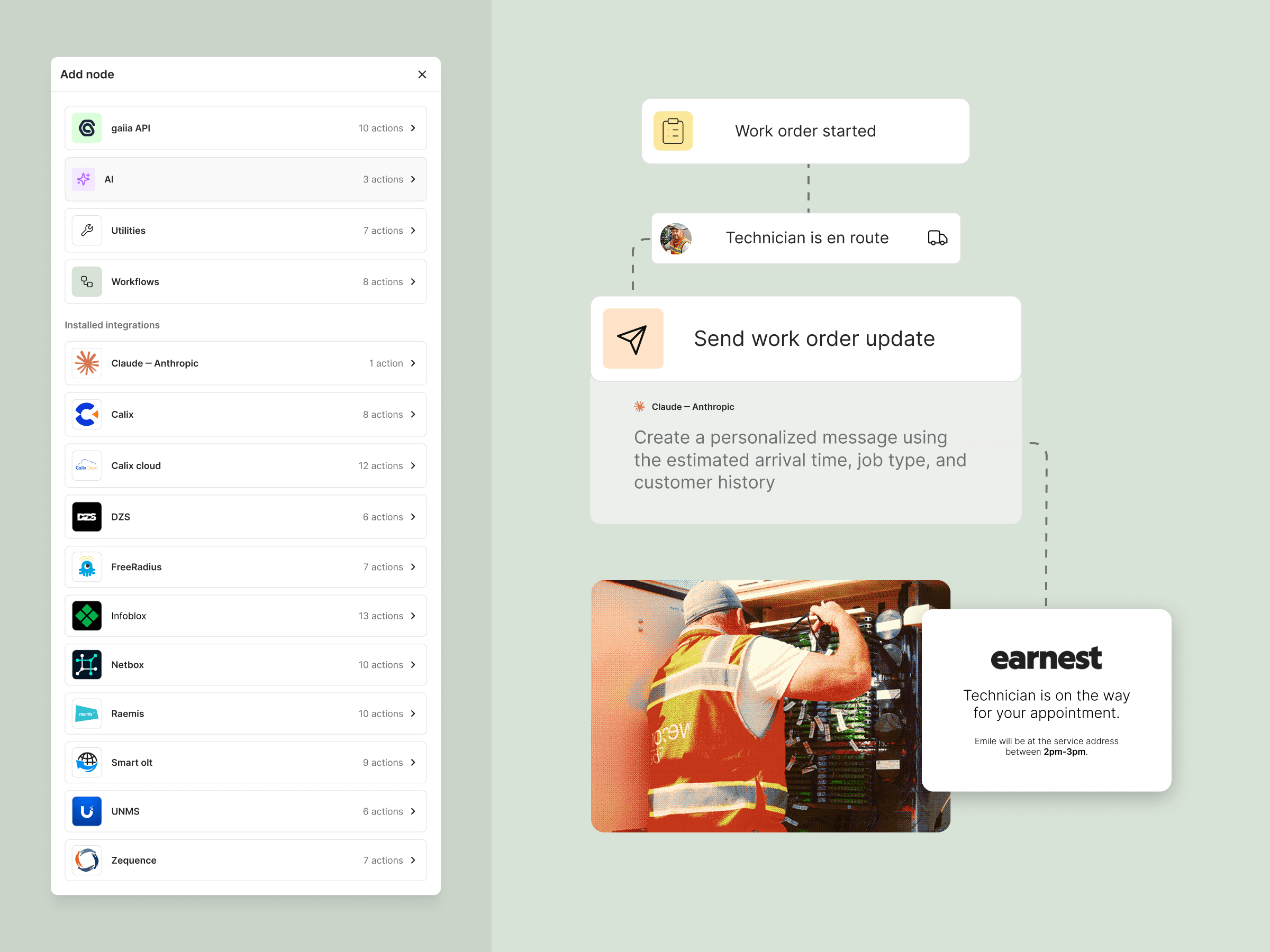

With the new AI Inference node in gaiia’s Workflow Builder, communications service providers (CSPs) can bring large language models directly into their operational workflows. Instead of copying information into external AI tools, a workflow can send data to a model, interpret the response, and take action automatically. No custom API integrations required.

The AI Inference node works just like any other node in the Workflow Builder. It can receive data from existing gaiia nodes and pass results to the next step in the workflow.

This means AI can now sit inside the same automations that already power provisioning, ticketing, notifications, and customer lifecycle events.
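As a rough illustration of that data flow, the sketch below models an inference step as a function that takes the payload from the previous step, calls a model, and hands an enriched payload to the next step. It is not gaiia's implementation: `InferenceStep`, `prompt_template`, `output_key`, and the payload field names are all hypothetical, and the model is simply any callable that maps a prompt string to a text reply.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

# Assumption: a "model" is any callable that turns a prompt into text.
# In practice this would wrap a call to the provider the operator selected.
ModelFn = Callable[[str], str]


@dataclass
class InferenceStep:
    """Hypothetical stand-in for an AI step inside a workflow."""

    prompt_template: str          # may reference payload fields, e.g. {ticket_body}
    model: ModelFn
    output_key: str = "ai_result"

    def run(self, payload: Dict[str, Any]) -> Dict[str, Any]:
        # Fill the prompt from the data produced by the previous step.
        prompt = self.prompt_template.format(**payload)
        reply = self.model(prompt)
        # Return a new payload so downstream steps see the original
        # fields plus the model's answer.
        return {**payload, self.output_key: reply.strip()}
```

A downstream step would then read `payload["ai_result"]` like any other field. Returning a copy rather than mutating the input keeps each step easy to test in isolation.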

For example, a workflow could:

All of this happens in a single automated flow. Instead of adding more manual review steps, workflows become capable of interpreting information and making smarter decisions.

The AI Inference node currently supports:

Operators simply connect their API key in the integrations module and select the model inside the workflow. This approach gives teams full control over:

If a team already uses Claude, ChatGPT or Gemini internally, they can now automate those same capabilities inside gaiia.

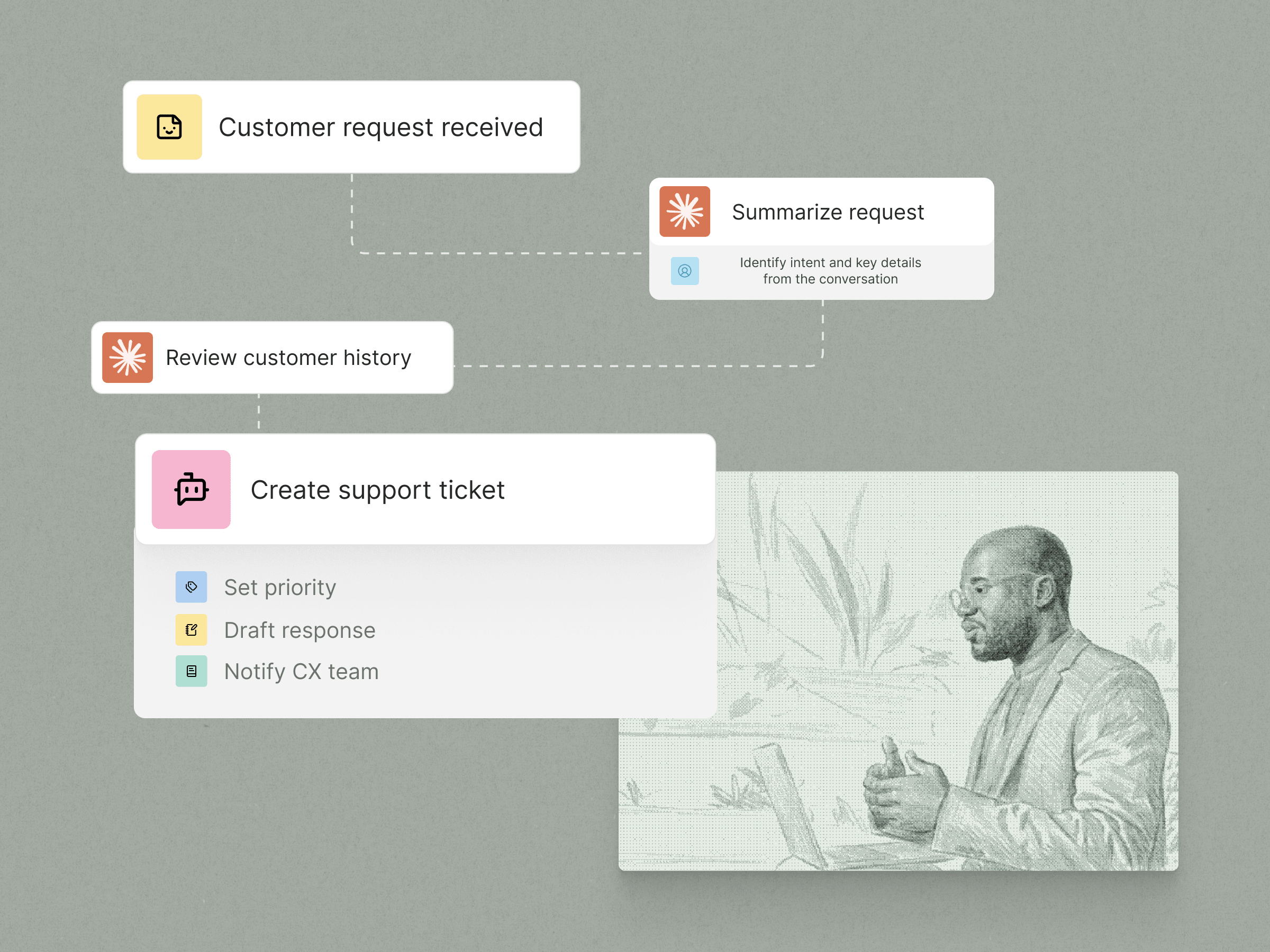

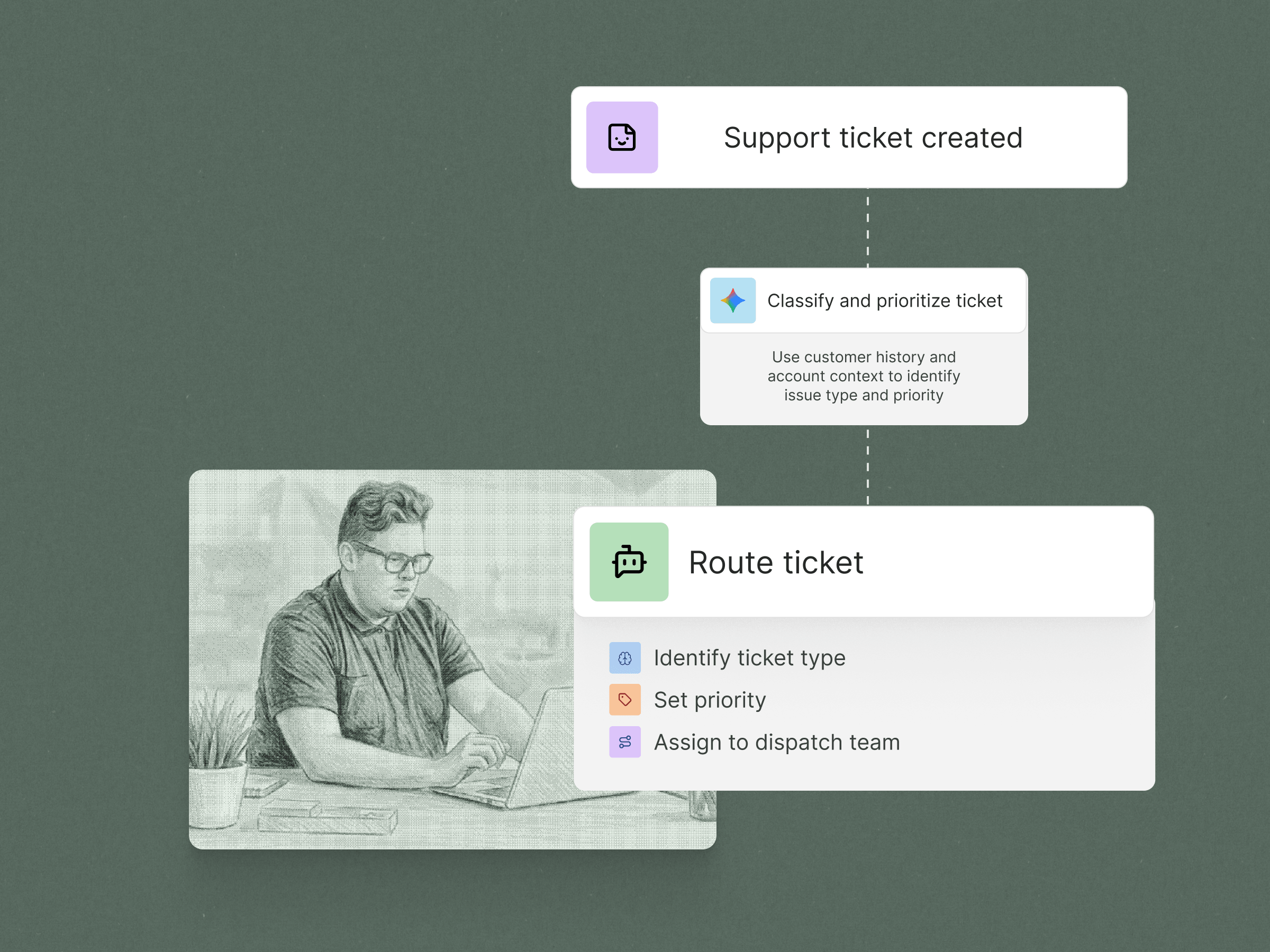

Many ISPs still route tickets manually or through simple keyword rules. This works for basic scenarios but breaks down when customer descriptions vary. With the AI node, workflows can interpret the intent of a support request and route it automatically.

Example Workflow:

Instead of relying on rigid rules, AI can understand the meaning behind customer messages and route issues more accurately. This reduces internal handoffs and speeds up resolution times.
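The routing idea can be sketched in a few lines. The example below is an assumption-heavy illustration, not gaiia's node: the intent labels, queue names, prompt wording, and the `route_ticket` helper are all made up for the example, and the model is passed in as a plain callable so the logic can be run without any provider account.

```python
from typing import Callable

# Hypothetical intents and the queues they map to.
QUEUES = {
    "billing": "Billing team",
    "outage": "Network operations",
    "installation": "Field services",
}
FALLBACK_QUEUE = "General support"


def build_prompt(message: str) -> str:
    """Ask the model for exactly one label from the allowed set."""
    labels = ", ".join(sorted(QUEUES))
    return (
        "Classify the customer message into exactly one of these intents: "
        f"{labels}. Reply with the intent only.\n\nMessage: {message}"
    )


def route_ticket(message: str, model: Callable[[str], str]) -> str:
    """Return the queue for a message, falling back when the reply is unexpected."""
    raw = model(build_prompt(message))
    # Normalize case, surrounding whitespace, and a trailing period.
    intent = raw.strip().lower().rstrip(".")
    return QUEUES.get(intent, FALLBACK_QUEUE)
```

Constraining the model to a fixed label set and sending anything else to a fallback queue keeps an unexpected reply from misrouting a ticket.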

Customer churn is often visible in interaction history long before a cancellation happens. Repeated support issues, billing disputes, or negative sentiment in conversations can all signal risk. With AI inside workflows, operators can automatically analyze customer interactions and flag accounts that may need attention.

A workflow could:

Instead of discovering churn only after a cancellation request, operators can proactively intervene when early signals appear.
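One way to picture such a check is shown below. This is a minimal sketch under stated assumptions: the prompt, the JSON reply shape (`risk` and `reason`), the `0.7` threshold, and the `assess_churn_risk` helper are all hypothetical, and the model is again any callable that maps a prompt to text.

```python
import json
from typing import Callable, List


def assess_churn_risk(
    interactions: List[str],
    model: Callable[[str], str],
    threshold: float = 0.7,  # assumed cut-off, tune per operator
) -> dict:
    """Ask a model to score churn risk and decide whether to flag the account."""
    history = "\n".join(f"- {item}" for item in interactions)
    prompt = (
        "Rate the churn risk for this customer from 0 to 1 based on the "
        'interactions below. Reply as JSON: {"risk": <number>, "reason": <text>}.\n'
        f"{history}"
    )
    raw = model(prompt)
    try:
        data = json.loads(raw)
        score = float(data["risk"])
    except (ValueError, KeyError, TypeError):
        # Unparseable replies are never flagged automatically.
        return {"flagged": False, "risk": None, "reason": "unparseable model response"}
    score = max(0.0, min(1.0, score))  # clamp to the expected range
    return {"flagged": score >= threshold, "risk": score, "reason": str(data.get("reason", ""))}
```

A flagged result could then drive a follow-up action, such as creating a task for an account manager. Treating malformed replies as "not flagged" is a deliberate, conservative choice for the sketch; a real workflow might send them to manual review instead.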

The real power of this feature comes from how it integrates with gaiia’s workflow engine.

Traditional OSS/BSS platforms tend to act as systems of record. They store data but rarely enable teams to build new operational capabilities on top of it. gaiia is designed differently.

By combining automation, integrations, and now AI inside the Workflow Builder, gaiia becomes a system of action. Operators are not just managing records. They are building automated processes that improve how the business runs.
